GeoStyle: Discovering Fashion Trends and Events

Utkarsh Mall¹, Kevin Matzen², Bharath Hariharan¹, Noah Snavely¹, Kavita Bala¹

¹Cornell University, ²Facebook

Abstract

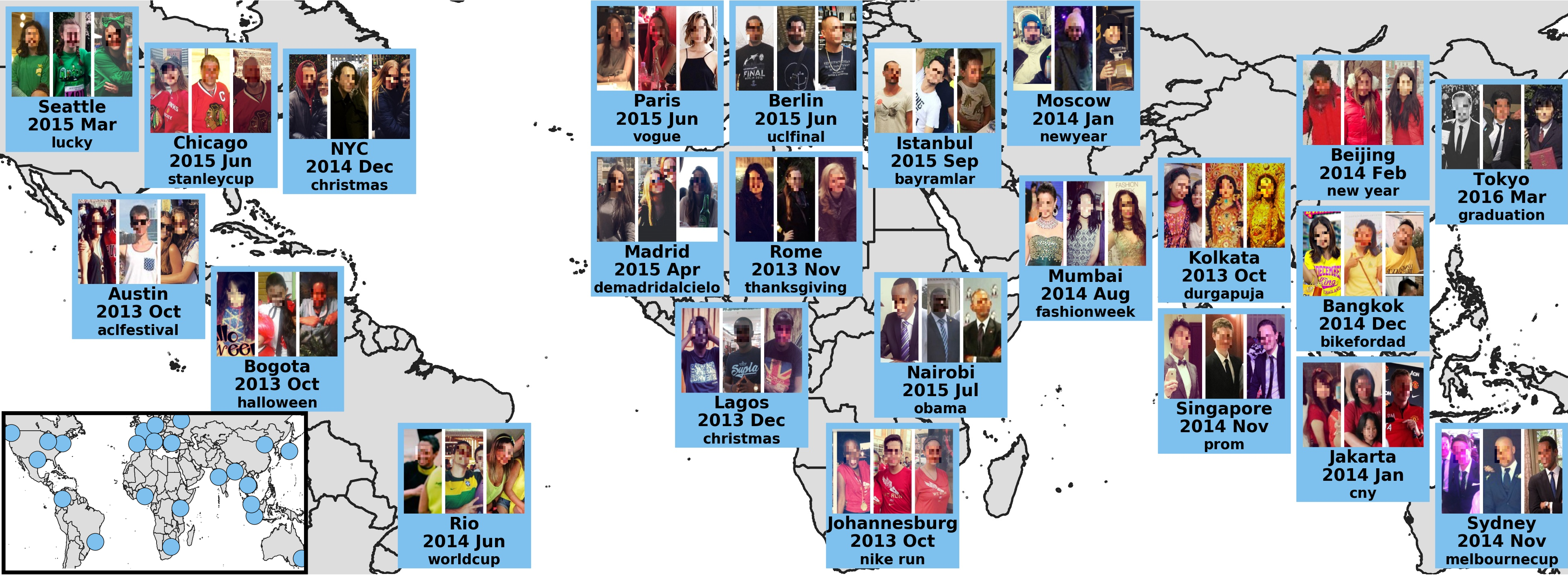

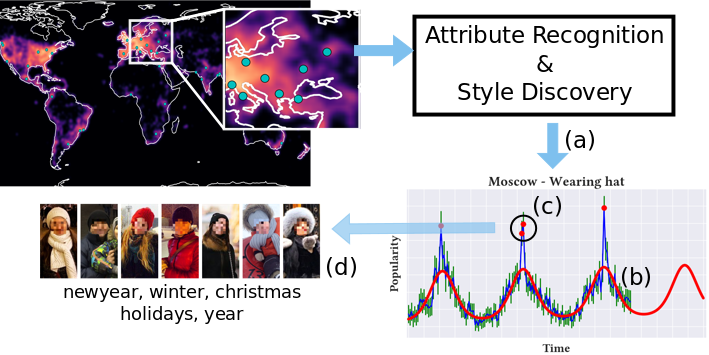

Understanding fashion styles and trends is of great potential interest to retailers and consumers alike. The photos people upload to social media are a historical and public record of how people dress across the world and over time. While we now have tools to automatically recognize the clothing and style attributes of what people wear in these photographs, we lack the ability to analyze spatial and temporal trends in these attributes or to make predictions about the future. In this paper we address this need with an automatic framework (see the figures above) that analyzes large corpora of street imagery to (a) discover and forecast long-term trends of various fashion attributes, as well as automatically discovered styles, and (b) identify spatio-temporally localized events that affect what people wear. We show that our framework makes long-term trend forecasts that are over 20% more accurate than prior art, and identifies hundreds of socially meaningful events that impact fashion across the globe.
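The abstract does not spell out how trends are forecast or how events are found. As a minimal sketch, assuming a weekly time series giving the fraction of photos in one city that show one attribute, one common baseline fits a linear trend plus a yearly sinusoid by least squares, extrapolates that fit to forecast, and flags weeks whose residuals are unusually large as candidate events. The function names, the 52-week period, and the 3-sigma threshold are illustrative assumptions, not the paper's exact model or its released code.

```python
import math
from typing import List, Sequence

PERIOD_WEEKS = 52.0  # assumed yearly seasonality for weekly data (illustrative)


def _features(t: float, period: float) -> List[float]:
    """Design row for y(t) = c + a*t + b1*sin(w*t) + b2*cos(w*t)."""
    w = 2.0 * math.pi / period
    return [1.0, t, math.sin(w * t), math.cos(w * t)]


def _solve(a: List[List[float]], b: List[float]) -> List[float]:
    """Solve a small dense linear system by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        s = m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = s / m[r][r]
    return x


def fit_trend(series: Sequence[float], period: float = PERIOD_WEEKS) -> List[float]:
    """Least-squares fit of [c, a, b1, b2] via the normal equations."""
    k = 4
    ata = [[0.0] * k for _ in range(k)]
    aty = [0.0] * k
    for t, y in enumerate(series):
        row = _features(float(t), period)
        for i in range(k):
            aty[i] += row[i] * y
            for j in range(k):
                ata[i][j] += row[i] * row[j]
    return _solve(ata, aty)


def predict(params: Sequence[float], t: float, period: float = PERIOD_WEEKS) -> float:
    """Evaluate the fitted trend-plus-season model at time step t."""
    return sum(p * f for p, f in zip(params, _features(t, period)))


def forecast(series: Sequence[float], horizon: int, period: float = PERIOD_WEEKS) -> List[float]:
    """Extrapolate the fitted model `horizon` steps past the end of the series."""
    params = fit_trend(series, period)
    n = len(series)
    return [predict(params, float(n + h), period) for h in range(horizon)]


def flag_events(series: Sequence[float], z: float = 3.0, period: float = PERIOD_WEEKS) -> List[int]:
    """Return indices whose residual deviates from the mean residual by more than z std devs."""
    params = fit_trend(series, period)
    resid = [y - predict(params, float(t), period) for t, y in enumerate(series)]
    mean = sum(resid) / len(resid)
    std = math.sqrt(sum((r - mean) ** 2 for r in resid) / len(resid))
    if std == 0.0:
        return []
    return [t for t, r in enumerate(resid) if abs(r - mean) > z * std]
```

For example, on three years of weekly data, `forecast(series, 26)` would extrapolate about half a year ahead. A sinusoid with a fixed period keeps the model small and easy to fit, but it cannot capture irregular seasonality, and a real system would also need to handle noisy per-city estimates.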

Paper

[pdf] [arxiv] [supplementary pdf]

Utkarsh Mall, Kevin Matzen, Bharath Hariharan, Noah Snavely and Kavita Bala. "GeoStyle: Discovering Fashion Trends and Events". In ICCV, 2019.

@inproceedings{geostyle2019,
  title={{GeoStyle}: {D}iscovering fashion trends and events},
  author={Mall, Utkarsh and Matzen, Kevin and Hariharan, Bharath and Snavely, Noah and Bala, Kavita},
  booktitle={ICCV},
  year={2019}
}

Code

Data

Acknowledgements

This work was supported by the National Science Foundation and an Amazon research award.

GeoStyle is licensed under a Creative Commons Attribution 4.0 International License.